Category: Corporate Visionaries

Governing Intelligence: Snigdha Bhardwaj on Building AI Systems Billions Can Trust

The internet’s center of gravity is shifting from retrieving information to generating it in real time. In that shift, the defining question is no longer access but accountability: who governs what billions of people now read, trust, and act on? At Google, Snigdha Bhardwaj is building the systems that answer it, embedding safety, judgment, and restraint into technologies whose outputs cannot be fully predicted. As generative AI becomes infrastructure, she argues, the winners of this era will not be those who scale fastest, but those who earn trust at scale.

In 2025, the global economy processed more AI-generated responses than financial transactions. That shift happened quietly. While markets tracked GDP and employment, a parallel infrastructure emerged in which machines create information rather than simply retrieve it. Search systems handle 14 billion queries per day, and increasingly, those queries are answered with responses synthesized in real time.

This infrastructure now underpins how businesses make decisions, how students learn, how governments communicate. When analysts research markets, when teachers answer questions, when doctors review symptoms, AI-generated content shapes the outcome. The systems producing those responses have become as fundamental as electricity grids or payment rails.

But the internet is reorganizing around a different question. For 25 years, the question was: "How do you find what exists?" Now it's: "How do you govern what gets created?"

That shift defines the next era of technology competition. Google, Microsoft, Meta, Amazon, and emerging AI labs are competing over whose governance frameworks earn enough trust to become default infrastructure. Markets worth trillions will flow toward the platforms that solve this problem. Those that don't will face regulatory intervention that reshapes their business models.

Snigdha Bhardwaj operates at the very core of that contest. As Director of Search and Generative AI Trust Strategy at Google, she is building governance systems for technology that didn't exist three years ago. Her frameworks help shape some of the industry's leading approaches to generative AI.

"The companies that figure out responsible scaling will own the next platform shift," she observes. "Those that don't will become cautionary tales."

This isn't abstract future-gazing. The divergence is happening now. Some platforms are pulling back from generative features after safety failures. Others are accelerating. The gap between leaders and laggards compounds quarterly. Within five years, the winners will be obvious.

Learning Systems Thinking at Infosys

Snigdha spent over a decade at Infosys, one of India's pioneering IT services companies, working her way up to Principal Consultant. The work took her across continents and industries: financial services for one of the largest financial institutions in Paris, manufacturing and energy across the United States, and retail operations in London.

Infosys built its reputation on delivering complex technology transformations at scale. That environment taught her to see organizations as systems. How governance structures hold up under pressure. How incentives shape behavior across thousands of people. How small design choices ripple through large organizations.

"Consulting forces you to understand systems fast," she says. "You walk into complex organizations, diagnose what's broken, and propose solutions that executives will actually implement. You don't get months to figure it out."

That discipline proved essential when she moved to platform technology. The problems got bigger. Billions of users instead of thousands of employees. The thinking stayed the same. Understand the system. Find the breaking points. Build solutions that scale.

When she saw an opening at Google for trust and safety work, she knew almost nothing about the field. She applied precisely because it was unfamiliar, and that unfamiliarity intrigued her. She joined in 2015. Within a decade, she went from managing search quality in India to leading global strategy for AI safety.

From Retrieval to Generation

Traditional search works by retrieval. You type a query. The system finds relevant pages and ranks them by authority and relevance. You click through to the sources. Responsibility is shared across the platform, the website, and the user.

Generative AI creates. The system produces original responses, and users read them as definitive answers. If something is wrong, accountability lies with the platform.

"You're no longer just pointing users to information," Snigdha explains. "You're producing a response that carries weight. That changes accountability fundamentally."

The technology itself is probabilistic. Transformer-based language models generate text one token at a time, each prediction drawn from statistical patterns learned in training. You can't guarantee what comes out. Billions of parameters interact in ways that resist complete control.

Because no one can know in advance which word a large language model will predict next, she insists that anyone designing these user journeys has to test them firsthand.

Her team handles safety across Google's generative products: AI Overviews, AI Mode, Gemini, NotebookLM. They stress-test features before launch. They run adversarial red-teaming exercises designed to break the system. They track what's happening across the industry and learn from failures wherever they occur.

"You can't figure out a solution for everything," she notes. "Jailbreaks are a normal research problem everybody faces. But you need to know your limitations. You need to keep your eyes and ears open, proactively detecting bad behaviors."

Her guiding principle: build safety into the product from the start. Design for edge cases upfront. Test extensively before shipping.

You have to red-team and stress-test whatever you've built. You always have to think about the longer term. You need to treat your resources as precious.

The Credibility Equation

Warren Buffett once said it takes 20 years to build a reputation and five minutes to ruin it. For global platforms, five minutes is generous.

"If you really think about that as a concept," Snigdha notes, "you might be doing things very differently because you could have worked really hard towards something but then it's like that. All big platforms operate under intense scrutiny, where an individual press incident or social media controversy can quickly shift the narrative away from a team's collective achievements."

She thinks about credibility across three dimensions: accountability, user voice, and continuous improvement.

Trust usually isn't about being perfect. It's about whether you feel accountable for your actions, for the products you introduce to the market, for the features you make available to users, for the controls you put in users' hands. You need to own that narrative. You have to be honest about your imperfections.

The metrics that matter aren't always obvious. A high number of content removals might look good on a dashboard, but it could also signal that your proactive systems are failing to stop problems before they spread.

"Metrics can give you comfort," she observes, "but comfort is not the same as truth."

Better metrics: How often does a problem occur in the first place? How fast can you detect it? How quickly can you fix it at scale?

The best signal is often silence. No escalation. No crisis. When systems work correctly, there's nothing to report.

Navigating Without Precedent

Innovation moves faster than regulation. You can't write rules for technology that doesn't exist yet. This creates gaps that someone has to fill with judgment.

When there isn't a clear rulebook, you fall back on your principles.

Her products are used by six-year-olds asking voice assistants to play songs, and by elderly people who need authoritative information to make financial decisions. That spectrum of vulnerability shapes every design choice.

She sees regulation as a forcing function. Think about Formula 1 racing. Every season introduces new technical constraints. Those constraints don't tell teams how to engineer solutions. They define boundaries. Teams compete to build the fastest car within those boundaries.

"I just feel about regulation as something which defines the standards for us but also pushes your boundaries," she says. "It helps you to innovate. It helps you to find the right balance between what you can deliver and what society needs."

Speed Versus Durability

Every company faces the same pressure: ship first, capture the market, scale quickly.

Snigdha pushes back. "A fast car is useless until the brakes are reliable."

Google wasn't first to launch webmail; Yahoo Mail arrived years before Gmail. But Google kept improving Gmail and kept solving problems users actually had. Eventually, Gmail became the standard.

"For us, whenever in Google we've made a decision, we've never slowed down to stop," she explains. "We slow down to ensure that we arrive at a place safely."

She describes this as Sundar Pichai's philosophy: "Bold and responsible. That's a narrative which Sundar really stands for."

She often reaches for the image of flying a kite. Wind provides lift: innovation, creativity, the freedom to explore. The string is discipline, boundaries, protection. Both are necessary for sustained flight.

"If you're flying a kite, it needs the wind to fly, which is openness," she explains. "But then at the same time you have to have a string which will help it not drift away. You're still setting boundaries."

Organizations that sacrifice safety for speed eventually pay, through regulatory fines, user attrition, or reputational damage.

Global Scale, Local Context

Platforms deploy globally overnight. Cultural norms don't travel that fast.

What's acceptable in one country may be harmful in another. Thailand has laws protecting the monarchy. India has social realities around caste. Content that serves users well in San Francisco might cause problems in Jakarta or Chennai.

"You need to have global values but they need to embed local context," Snigdha says. "You need to design that. You need to think about what values are really universal: integrity, standing up for the user, helpfulness. That's universal. But how it's expressed in a society, in a village in India versus a city in America, differs."

Her teams consult regional experts before entering new markets. They map legal requirements against internal standards. They study historical context shaping how different populations interpret content.

Competing Priorities

Regulators want transparency. Commercial partners want growth. Users want safety and privacy. These interests conflict regularly.

"While everyone deserves a voice, the most vulnerable should often get priority," she explains. "When regulators are demanding data, partners are pushing for growth, and users are seeking safety and privacy, you need to be able to navigate these tensions by centering the human at the end of the wire."

Her hierarchy is explicit: user safety comes first. Platform integrity, grounded in strong principles, comes second. Every other consideration comes third.

You need to be able to prioritize the safety of the individual over the convenience of the situation, or of the organization you're part of.

Growing up as the eldest daughter in a joint family in Bhopal instilled this instinct early. "If you're elder in the family, then you're informally sort of playing that role, which could mean you're ensuring harmony, you are protecting your younger siblings, you're maintaining the trust which your parents might have placed in you."

Active Stewardship

A big question persists: Should platforms be neutral infrastructure or active stewards?

Transparency is often not about telling everything. It is about meaningful disclosure.

Platforms can be transparent about intentions while protecting specific technical defenses. "You have to be fiercely protective about specific technical signals and defenses," she explains, "because if you reveal them, then there are malicious actors and they could harm the very users which we are serving."

Safety becomes a prerequisite for speech. When harassment dominates a platform, people without power to withstand it simply leave. The platform becomes less diverse, less valuable, less representative.

The Opportunity Ahead

While much conversation centers on AI risks, Snigdha maintains equal focus on what's possible.

"I would like to inherit a digital world that reflects the values of what my generations would have taught me, thinking that truth matters, it's our greatest responsibility to look after one another," she reflects.

The same systems requiring careful governance can democratize capabilities previously accessible only to the wealthy or well-connected. Language translation can bridge educational gaps. Accessibility tools can empower users with disabilities. Information synthesis can reduce research friction for professionals worldwide.

"I just hope that we have a world where technology is an equalizer and not a divider," she says. "Where future generations should be able to have the world's information at their fingertips without fearing for their safety, their privacy."

For young leaders entering this space, her advice is direct: Choose curiosity over comfort.

"That curiosity of 'I don't know anything about trust and safety' to 'oh my god, I'm interested in this' to 'oh my god, there's so much in this field that I never knew about being outside of Google' to how do you become industry leader in some of these areas. It just kept unraveling itself to me."

She emphasizes long-term thinking in an industry that rewards speed.

You're building infrastructure that will outlast you. Design accordingly.

Leadership Principles

Build in safety from the start. Protection added later is always weaker than protection designed into foundations.

Be honest about limitations. Credibility comes from acknowledging constraints openly.

Navigate by principles when rules don't exist yet. When there is no precedent, your values become your guide.

Use constraints to force better thinking. Boundaries define space where creativity works best.

Optimize for durability over speed. Long games determine ultimate outcomes.

Scale globally, adjust locally. Universal principles need contextual expression.

Protect vulnerable users first. When you design for the most at-risk, you create safety for everyone.

Measure what doesn't happen. The crisis that never occurs is the real success metric.

Act, don't just observe. Neutrality that enables harm is complicity.

Build for what lasts. Present choices embed assumptions that future generations inherit.

Building for the Long Term

Systems reach billions before their consequences are fully understood. In this environment, the leaders who matter most understand that today's choices become tomorrow's constraints.

The best work in this field is invisible. When safety systems function correctly, users never think about them. They search, they learn, they create, they transact. The infrastructure stays hidden.

The most profound signal of a working defense is often the absence of a headline. In your house, when things are going well, everybody is quiet. There's a steady rhythm. It's the same in trust and safety: our greatest victories are the crises that never happen, because a foresight-driven system caught them early and kept things quiet.

She references Lao Tzu: "A leader works best when people barely know that he exists. When his work is done, his aim fulfilled, people might just say, 'We did it ourselves.'" That, she adds, builds confidence in the way people operate.

"Our goal often for the user is to feel powerful, feel safe, even if they never see the millions of lines of code which actually made that possible."

This invisibility creates a challenge. How do you build organizational support for work that succeeds by preventing events nobody sees?

Snigdha's answer is to shift the conversation from prevention to enablement. "You often have to think about empowerment over fear."

The leaders building this infrastructure now won't receive immediate recognition. But over time, the organizations that got this right will be obvious. They'll be the ones that earned enough credibility to become default infrastructure. The ones regulators trust. The ones users depend on. The ones that survived repeated waves of technological change because they built something more durable than the latest model.

The wind makes the kite fly. The string keeps it aloft.

Our Suggestions to Read

Discover The Leaders Shaping India's Business Landscape.

Founders & Innovators

Anuradha Maheshwari: Programming Law for the Age of Intelligence

Anuradha Maheshwari explores how intellectual property law, AI, data privacy, and innovation are reshaping ownership, creativity, and accountability. Through her work at Lex Mantis, she examines why legal systems must evolve with technology, protect human creativity, simplify access to rights, and balance innovation with ethics, empathy, and institutional trust.

Read Full Story

Corporate Visionaries

Ruchi Tushir and the Business of Trusted Judgment

Ruchi Tushir explores how AI, healthtech, legal technology, and enterprise intelligence are reshaping decision-making in regulated industries. Drawing from leadership roles across Microsoft, SAP, EY, and Wolters Kluwer, she examines trust, workflow integration, scalable leadership, data interpretation, and why judgment, reliability, and contextual intelligence increasingly determine long-term business value.

Read Full Story

Corporate Visionaries

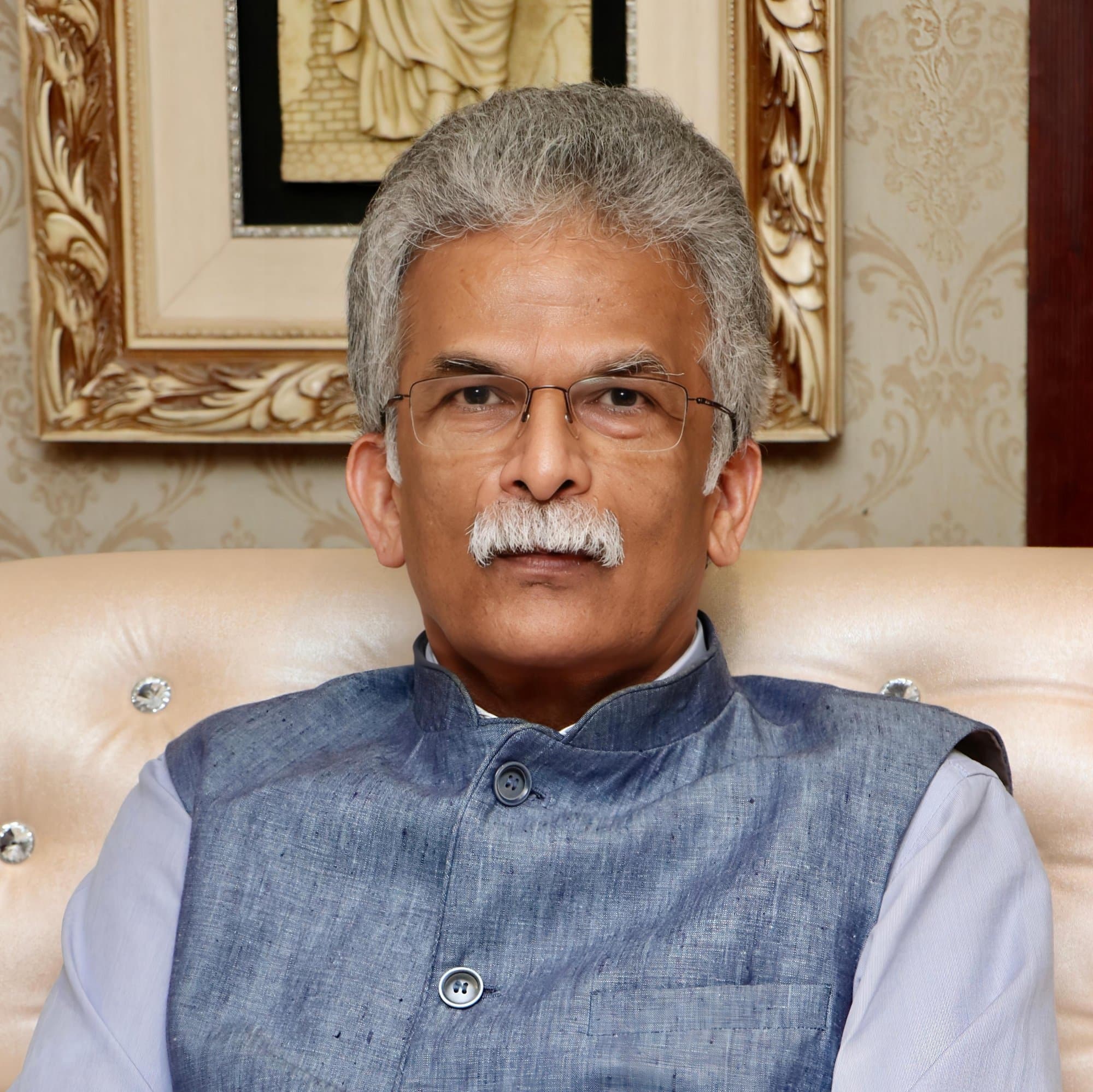

Pradeep Dhobale and the Hard Work of Building Serious Enterprises

Pradeep Dhobale’s leadership journey across ITC, Hyderabad Angels, and sustainability-led investing reveals how governance, capital allocation, institutional trust, and operational discipline shape resilient businesses. The article explores startup ecosystems, founder evaluation, green entrepreneurship, and why long-term competitiveness increasingly depends on systems thinking, responsible leadership, and sustainability embedded into business economics.

Read Full Story