Category: Founders & Innovators

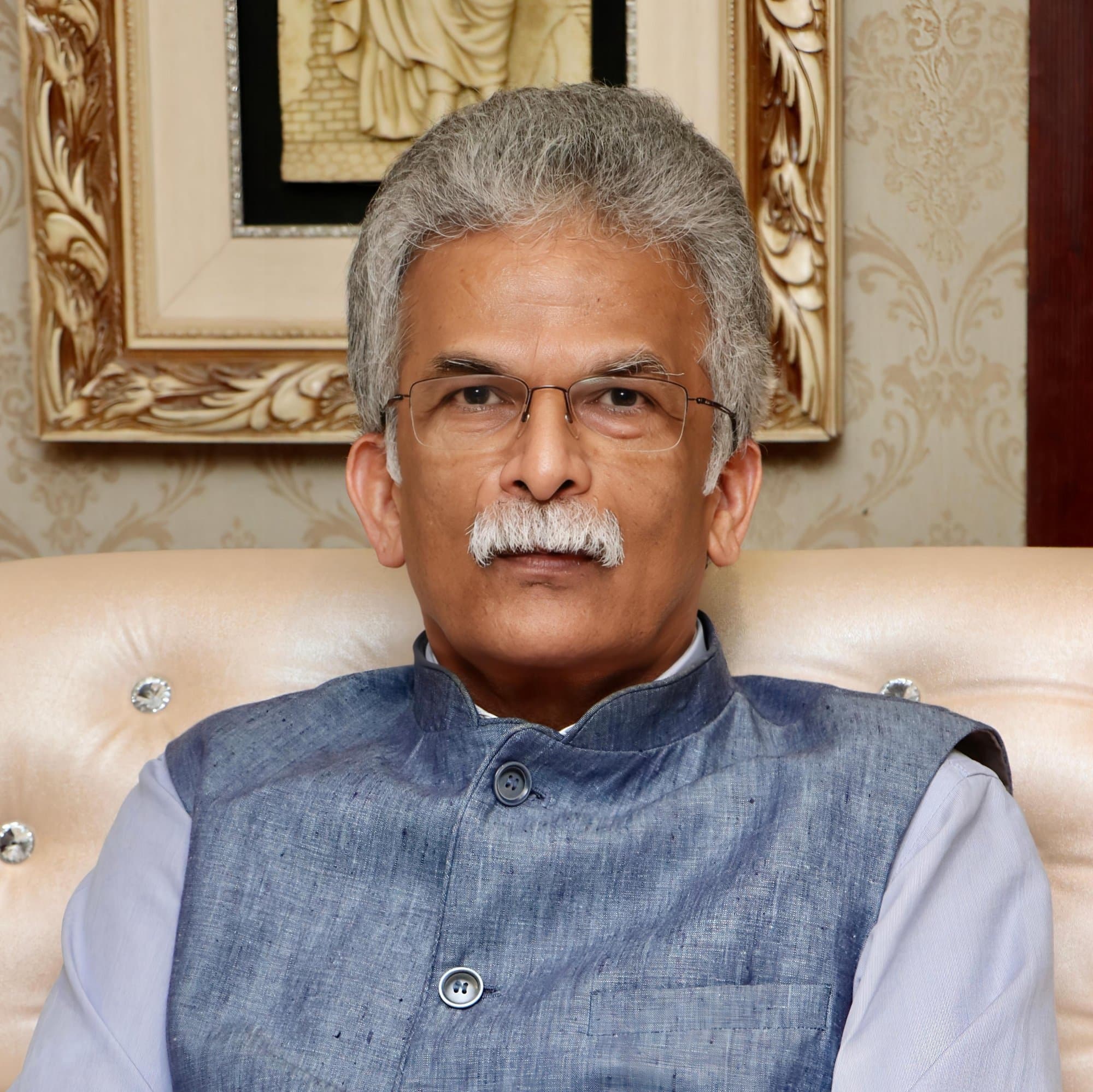

When Intelligence Outruns Judgment: Dr Brindha Jeyaraman on the Leadership Test AI Is Forcing

As AI accelerates, intelligence is scaling faster than the judgment needed to contain it. Dr Brindha Jeyaraman argues that the real leadership test is no longer technical capability, but institutional maturity: whether organizations can embed governance into design, preserve human judgment, and deploy AI without creating hidden fragility. In a world eager to move fast, the advantage will belong to those who build systems that can be trusted at scale.

Artificial intelligence has created a strange asymmetry inside modern institutions. The people building it often move faster than the systems meant to contain it, the people buying it often understand its promise better than its implications, and the people leading organizations are now being asked to make decisions about intelligence they did not grow up managing. That is why the AI conversation has become much larger than technology. It is now a question of judgment, institutional design, and leadership under conditions of accelerating uncertainty.

Few leaders understand this transition as intimately as Dr Brindha Jeyaraman. She has spent years across engineering, enterprise AI, regulated systems, and governance, which gives her a perspective that is still rare in the field. She knows how intelligent systems are built, how they behave when they move into real operating environments, and how quickly technical capability can outpace managerial seriousness. At a moment when every sector wants AI and very few institutions have fully understood what it means to live with it responsibly, her work carries significance far beyond her title.

For Brindha, this concern comes from lived professional progression rather than abstract theory. Over nearly two decades, she moved from building software systems to shaping AI adoption, and then into the harder question of how intelligence should be governed once it begins to influence customer outcomes, institutional decisions, and the risk posture of highly sensitive environments. More recently, after leading AI governance in a major banking institution, she stepped into entrepreneurship with Aethryx, a venture focused on governance infrastructure for enterprise and agentic AI systems. The move feels consistent with the line of thinking she has been developing for years. The central question has remained steady: how do institutions use intelligence at scale without weakening themselves in the process?

What makes Brindha compelling is the seriousness of her lens. She speaks about AI through systems, incentives, exposure, operating discipline, and human judgment. In her view, governance belongs inside design, accountability, deployment logic, and organizational behavior. That is what gives her work wider relevance. She is speaking to a much larger leadership issue: whether organizations can introduce intelligence into their operating core without creating fragility where they expected advantage.

When Curiosity Arrives Before Demand

Brindha entered this field long before AI became the strategic vocabulary of every board and leadership offsite. She began in software engineering in 2007, when AI still carried the texture of a specialist interest. It had research appeal and technical intrigue, but very little of the market certainty that would later bring investors, executives, and entire product organizations into the space with urgency.

Yet even then, certain ideas kept pulling her back. During her student years, a neural networks module stayed with her more powerfully than the standard computing subjects around it.

“It kept stinging me. There should be more to this.”

That sentence says a great deal about her. Brindha tends to move toward the unresolved question, toward the area where the logic still feels unfinished and where the category itself has not fully settled. She followed that instinct into advanced study in Knowledge Engineering in Singapore, and later into doctoral work in AI focused on temporal knowledge graphs, graph neural networks, financial intelligence, and credit risk. These details matter, though only in the right proportion. They show rigor. They also show continuity. She stayed with the field long enough for the market to eventually move toward the questions she had already chosen.

Over the years, her work stretched across engineering, prediction systems, analytics environments, AI platforms, regulatory institutions, enterprise cloud programs, and GenAI adoption across sectors. She also wrote extensively, including books on machine learning, streaming systems, observability in finance, and generative AI for finance. Teaching and speaking became part of that progression as well. The pattern is clear. She kept deepening her understanding while staying close to execution.

The way she describes that path is striking for its practicality.

“If I’m going to spend that much effort learning something, I want it to be in the area that genuinely interests me. Then it doesn’t become stress.”

That orientation shaped her leadership style. Interest, in her case, sharpened discipline. She took the day job seriously while building depth in a field that had not yet become commercially obvious. By the time AI became central to enterprise strategy, she had already accumulated something many organizations were only beginning to realize they needed: technical range, operating experience, and the ability to think about intelligence as part of a larger institutional system.

The Point Where Capability Meets Consequence

One reason Brindha’s perspective feels unusually valuable is that she has lived on both sides of the AI curve. She has seen the field as a builder, and she has seen it as a leader responsible for what happens after systems enter institutional use.

That distinction matters more than many executives realize.

In many companies, AI is still discussed through the language of capability. What can it automate? How much time can it save? Which workflow can be accelerated? Which team should deploy it first? Those are valid questions. They are also incomplete in sectors where error carries real cost and where trust is tied directly to operations, reputation, and regulatory confidence.

Banks, healthcare systems, regulators, and other high-accountability environments force a different kind of seriousness. There, a system does not become credible simply because it performs well in a controlled test or creates a compelling demonstration. It has to survive real-world complexity, cross-functional scrutiny, data sensitivity, auditability, escalation logic, and reputational exposure. A GenAI assistant can appear highly effective in a pilot and still create serious institutional risk once it touches customer interaction, internal decision support, or sensitive data flows.

Brindha speaks about this with the calm clarity of someone who has seen the gap from inside.

“Usually the challenge is people feel governance is a showstopper or a brake.”

That observation goes straight to the core tension in enterprise AI. Governance often enters too late. Teams innovate, momentum builds, commercial pressure intensifies, and governance arrives at the final stage to examine a system whose assumptions have already hardened. At that point, every control feels like delay. Every question feels like interference.

Brindha’s answer is structurally different.

“You don’t have to wait till the final mile. You can incorporate governance principles in the design phase and architecture phase itself.”

This is one of her strongest leadership ideas. Governance by design is not a compliance slogan. It is an operating philosophy. It moves trust into the infrastructure of the system. It shapes products before weak assumptions solidify into expensive problems. Over time, this improves launch readiness, reduces rework, sharpens coordination between product, engineering, risk, and business teams, and creates a stronger path from experimentation to institutional use.

The business wisdom here is straightforward. Strong governance supports speed because it reduces fragility. Weak governance does not eliminate delay. It relocates delay into later and more expensive forms: redesign, approval bottlenecks, internal distrust, regulatory discomfort, and scaled deployments that leadership teams never fully trust.

The Gap Between Policy and Practice

Brindha is especially sharp when she talks about the confusion that still dominates the field. Many organizations collapse laws, principles, policies, frameworks, and operational execution into one broad conversation and then wonder why implementation remains weak.

“There is a lot of confusion between laws, policies, frameworks, and operationalization.”

This matters because each of those layers performs a different role. Laws define external obligation. Policies express institutional commitment. Principles identify values. Frameworks organize decisions. Operationalization determines whether any of it actually changes how a system is built, monitored, used, escalated, and controlled inside production environments.

This is where many governance efforts become performative. Institutions can write documents. They can publish standards. They can run review committees. Yet none of that guarantees that the control logic has entered the workflow, the product, the access model, the monitoring system, or the user behavior surrounding the technology.

Brindha’s background gives her the ability to move past that surface layer. She understands that different AI systems create different types of exposure, and that serious institutions need the discipline to classify those differences clearly. A content-generation use case raises one set of questions. A customer-facing assistant raises another. An agentic system with broader autonomy requires a deeper conversation around authority, oversight, traceability, and intervention.

This is why risk tiering matters. Classification matters. Reusable controls matter. Institutions that can distinguish use cases intelligently will move with greater confidence because they are applying proportionate governance rather than improvising every time a new initiative appears.

Brindha’s thinking here is deeply practical. She speaks about foundational controls, reusable controls, and then more specific controls for particular contexts. That is a mature enterprise instinct. If an organization has to renegotiate trust from scratch every time a new workflow appears, the institution is still immature. Mature institutions create an internal operating logic that can travel across use cases without losing coherence.
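The layering described above (classify the use case, then apply foundational, reusable, and context-specific controls) can be illustrated with a minimal Python sketch. Every name in it is hypothetical and chosen only for illustration: the three tiers, the classification rules, and the control names are assumptions, not a framework attributed to Brindha or to any institution.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    # Hypothetical tiers, loosely mirroring the three examples in the text.
    LOW = 1     # e.g. internal content generation
    MEDIUM = 2  # e.g. customer-facing assistant
    HIGH = 3    # e.g. agentic system with broader autonomy


@dataclass(frozen=True)
class UseCase:
    name: str
    customer_facing: bool
    autonomous_actions: bool


# Foundational controls apply to every use case (assumed names).
FOUNDATIONAL = ("access_control", "usage_logging")

# Reusable controls are defined once per tier and shared across use cases.
REUSABLE_BY_TIER = {
    RiskTier.LOW: (),
    RiskTier.MEDIUM: ("output_monitoring", "escalation_path"),
    RiskTier.HIGH: (
        "output_monitoring",
        "escalation_path",
        "human_approval",
        "action_traceability",
    ),
}


def classify(use_case: UseCase) -> RiskTier:
    """Assign a tier using simple, illustrative rules."""
    if use_case.autonomous_actions:
        return RiskTier.HIGH
    if use_case.customer_facing:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def required_controls(use_case: UseCase, specific: tuple = ()) -> list:
    """Combine foundational, tier-level, and context-specific controls.

    Order is preserved and duplicates are dropped.
    """
    tier = classify(use_case)
    combined = FOUNDATIONAL + REUSABLE_BY_TIER[tier] + tuple(specific)
    return list(dict.fromkeys(combined))


drafting = UseCase("marketing drafts", customer_facing=False, autonomous_actions=False)
assistant = UseCase("support assistant", customer_facing=True, autonomous_actions=False)
agent = UseCase("payments agent", customer_facing=True, autonomous_actions=True)

for case in (drafting, assistant, agent):
    print(case.name, classify(case).name, required_controls(case))
```

In this sketch a new use case inherits the controls already defined for its tier, so only the context-specific additions need fresh discussion. That is one way to read the idea of proportionate governance that does not renegotiate trust from scratch.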

The Human Cost Hidden Inside Convenience

A profile of Brindha would remain incomplete if it stayed inside governance mechanics, because one of her most important contributions sits at a deeper cultural level. She is asking what these technologies are doing to human judgment itself.

“The silent surrendering to these technologies is what concerns me.”

That phrase stays with you because it describes something subtle and increasingly common. Surrender rarely feels dramatic while it is happening. It feels efficient. It feels productive. It feels sensible. Students use AI to finish faster. Young professionals use it to structure work faster. Teams use it to summarize, rewrite, brainstorm, and accelerate output. Each choice appears rational in isolation. Over time, though, a quieter shift begins. The discipline of thinking, wrestling, synthesizing, and solving starts to thin out.

Brindha feels this especially sharply, including in her own life as a mother. She is not romanticizing struggle for its own sake. She is pointing to the developmental value of effort, repetition, delayed understanding, and slow problem-solving.

“If you can’t get it, it’s okay. That failure is okay. You try again. That process is important.”

There is a deeper leadership lesson here. Technology leaders are now shaping the environments in which younger employees learn how to think, work, and build confidence. If every difficult stage is softened too early by tools that handle the structuring and drafting for them, the short-term productivity gain may conceal a long-term capability loss that institutions will only discover years later.

This concern gives Brindha’s thinking unusual breadth. She is not only asking whether a system works. She is asking what kinds of professionals, teams, and institutional habits are being formed around its use. That question belongs firmly within the domain of tech leadership.

Pressure Reveals the Quality of Judgment

Brindha understands performance pressure, ambitious targets, and the expectation that leaders will move quickly while still delivering confidence. She does not speak as though governance happens in an idealized moral vacuum.

“Commercial pressure is always there. But what you can’t compromise is pushing something harmful into production just to meet that pressure.”

That is the line where leadership becomes visible. In decisions. In timing. In what gets approved. In what gets slowed down. In what gets redesigned even when internal momentum is pushing the other way.

This is also why her move into building governance infrastructure through her own venture feels timely. The AI market has energy, tools, funding, and abundant promise. What it still lacks, especially in enterprise and regulated environments, is operational seriousness. The organizations that matter most over the next decade may well be the ones that create strong enough foundations to keep judgment intact as intelligence becomes more deeply embedded in institutional life.

Brindha’s leadership sits exactly in that space. She is not chasing hype. She is trying to make the field structurally usable.

What the Next Five Years Will Demand

Brindha sees governance becoming more embedded, more adaptive, and much closer to live operations than it is today. The strongest systems will not exist as static control documents or ceremonial review layers. They will classify risk dynamically, observe behavior continuously, make accountability more visible, and connect technical controls much more directly with institutional decision-making.

What will the most sophisticated AI governance systems look like five years from now, in forms we can barely imagine today?

Her thinking points in a clear direction. They will be far more infrastructural. They will sit inside active systems. They will understand different categories of use cases. They will adjust with context. They will make oversight part of the operating environment itself.

That shift will matter because the next chapter of AI will be less about access and more about absorption. The tools are already here. The real differentiator will be whether institutions know how to live with them well.

Leadership Lessons

The most valuable technology leaders often commit to a field before the market validates it.

Curiosity becomes a strategic asset when it is matched by execution discipline.

AI leadership is increasingly about institutional maturity.

Governance creates the most value when it enters at the design stage.

Different AI systems require different levels of scrutiny. Classification is a leadership discipline.

Laws, policies, principles, frameworks, and implementation are different layers and should never be confused.

Reusable controls create scale because they reduce reinvention and improve confidence.

Technical fluency matters in governance because abstract rules cannot operationalize themselves.

Weak control often creates delay later in far more expensive parts of the organization.

Leaders should pay close attention to what cognitive habits AI is strengthening and what habits it is quietly replacing.

Commercial pressure reveals the quality of judgment more clearly than strategy decks ever will.

The future belongs to institutions that can absorb intelligence while keeping trust intact.

Brindha’s relevance comes from the kind of leadership she represents. She has spent enough time close to systems to understand complexity without being intimidated by it, and enough time inside institutions to know that technology succeeds only when judgment, discipline, and operating trust rise with it. That combination is still rare. Many leaders can speak the language of innovation. Far fewer can translate innovation into something an institution can actually live with, scale with, and remain accountable for.

That is what makes her work resonate beyond her own domain. She is part of a generation of technology leaders being asked to do more than introduce new capability. They are being asked to shape how institutions think, decide, and behave when intelligence becomes part of everyday operations. In that sense, the real leadership challenge is larger than AI itself. It is about whether organizations can become wiser as they become more powerful.
